You Invested in AI but See No Results.

Leadership made it a priority. Tools were bought, pilots ran. Yet nobody owns the AI strategy, nobody iterates on the pilots, and adoption is inconsistent across teams.

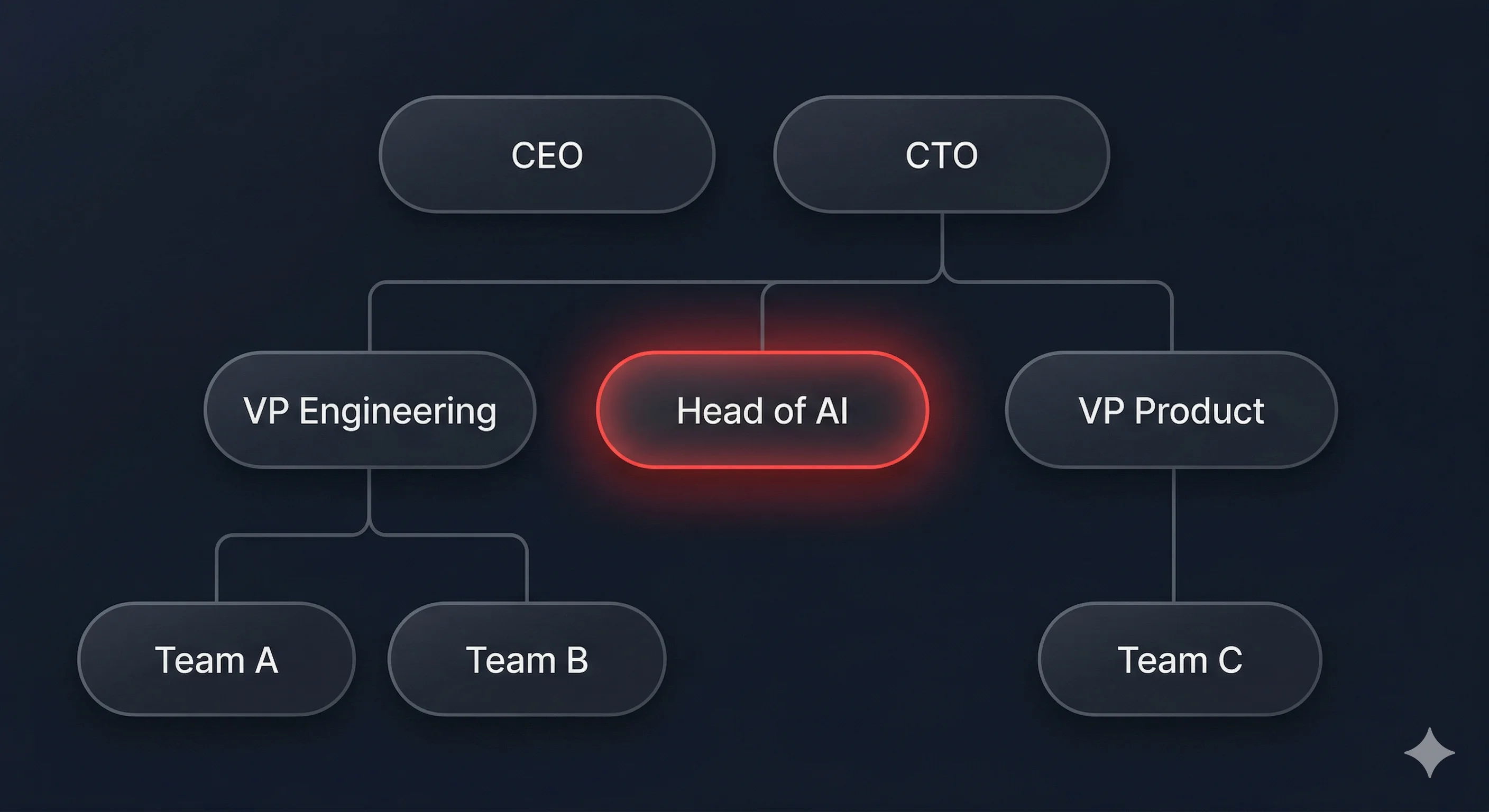

I step in as your Fractional Head of AI, owning strategy, architecture, team enablement, and governance until your team can run AI on its own.

Nobody owns AI in your company. No wonder it feels like AI doesn’t deliver.

Your company invested in AI: tools, licenses, a pilot or two. Yet nobody owns the outcome. Pilots ship and nobody touches them again. AI runs as everyone’s side project. Nobody knows if any of it is actually working. The investment happened. The leadership didn’t.

No Owner, No Progress

AI is on every team’s OKRs but is nobody’s actual job. Strategy is scattered across teams; pilots ship, but nobody iterates on them. Initiative after initiative starts strong and quietly dies.

Pilot-to-Production Gap

The proof of concept works. The scale-up never happens. "Many are able to get really great use cases running in the lab; [taking them further] is where most of them fail." It’s not a technical problem; it’s an organizational one. Nobody has the authority to push AI from demo to delivery.

Leadership Is Asking. You Don’t Have an Answer Yet.

Investors want AI maturity. The CEO wants a strategy by the end of the quarter. You’re already stretched across hiring, architecture, and delivery, and now AI is yours too. The pressure is real. The capacity to act on it isn’t.

AI Stuck in the IT Corner

AI gets delegated to IT or engineering. They treat it as a tooling decision: licenses managed, access configured. But the business-impact layer (strategy, product integration, cross-functional enablement) never gets designed. The org has AI tools. It doesn’t have AI capability.

Why this keeps happening

Four structural gaps that no tooling budget will fix. Each one needs someone who owns the outcome: not another vendor, not another pilot.

No dedicated AI leadership

AI responsibility is distributed across teams that already have full-time jobs. Nobody owns the strategy, nobody prioritizes across initiatives, nobody closes the loop between "shipped" and "delivering value." “It can’t be a plus-one topic for anybody”: AI can’t be an add-on to someone’s existing role.

Indicators

- AI is on OKRs but nobody is accountable for outcomes

- No single person can answer "what’s our AI strategy?" in one sentence

- Initiatives start in different teams with no coordination

Pilots succeed, then die

The proof of concept worked. Technically. Then nobody scaled it, because nobody owned it. "Getting something into a pilot is one thing. Getting it from pilot to scale is another. A year later, the tool isn’t used at all, even though it was a good idea."

Indicators

- You have a pilot from 6+ months ago that nobody has touched since

- The team that built it moved on to other priorities

- Nobody measures whether the pilot delivered value

No enablement, no adoption

Leadership said "use AI." Nobody said how. No training program, no shared standards, no proven workflows, just "go figure it out." Engineers who tried six months ago had a bad experience and stopped. Others never started. "The gap between what these tools can do and what most teams are getting from them is huge, and growing."

Indicators

- No formal AI onboarding or training program

- Engineers use AI inconsistently: some go all-in, most not at all

- No shared standards for what "good AI usage" looks like

- Early bad experiences killed motivation and nobody tried again

No iteration loop, no measurement

Features ship and nobody asks whether they worked. No evaluation, no monitoring, no feedback loop between what shipped and what users actually need. "After six months you ask: how do we actually know whether things got better? Apart from some kind of felt truth, maybe."

Indicators

- No metrics for AI feature performance

- AI features launched reactively for sales, never measured afterward

- "Felt truth" instead of data: the team believes AI is helping but can’t prove it

How it works

From first call to your org owning AI independently.

Intro call

We talk for 30 minutes. I learn where your org stands with AI: what’s running, what stalled, what’s missing. You’ll know by the end whether this is the right fit.

Assessment and strategy

I assess your AI maturity: team capabilities, data foundation, tooling, knowledge gaps. You get a written strategy brief with a prioritized 90-day action plan and AI transformation OKRs. This is where most companies discover the real problem isn’t the tech.

Embedded AI leadership

I join your org as Head of AI, owning AI end to end. That means defining the strategy, rolling out tooling standards and enablement per team, building evaluation and monitoring pipelines, and measuring what actually works. I stay until your team can sustain it without me. Typical engagement: 3–9 months.

Choose Your Path Forward

From a focused assessment to embedded AI leadership, pick the engagement that matches where you are.

Get Clarity

A focused sprint to understand where you stand and where to focus first.

- Current-state AI assessment: tooling, pilots, workflows, gaps

- Team AI literacy and knowledge gap analysis

- Data foundation assessment: readiness, quality, and accessibility for AI use cases

- Prioritized 90-day action plan with written strategy brief

Build Capability

Everything in Get Clarity, plus: I join your team as Head of AI, owning the full picture for one team.

- AI strategy and transformation OKR definition for your team

- AI-ready infrastructure: documentation standards, context engineering, feedback loops

- Structured AI enablement: tooling standards, workflow templates, adoption rollout

- AI monitoring and measurement: evaluation pipelines, adoption tracking, ROI reporting

- Vendor evaluation and governance framework

Stay Sharp

Everything in Build Capability, scaled across your entire org. Plus ongoing support after I leave.

- Org-wide AI enablement rollout across all teams

- Cross-functional AI coordination and prioritization

- Help defining the full-time AI leadership role and evaluating candidates

- Knowledge transfer documentation and team handover

- Monthly advisory calls and async support during the transition

What Clients Say

“Viktor has been helping us to adopt AI at simpleclub. He ran workshops for the team on how to use Claude Code, which turned out to be super useful and helped my team deliver good results faster. He also ran a system-wide initiative to cover the code of our services with AGENTS.md files at simpleclub. After the initiative, we experienced a huge improvement in the quality of the AI-generated code.”

Mateusz Prusaczyk

Lead Engineer @ simpleclub & author of the softwarephilosopher blog

Built on Real AI Leadership

Viktor Malyi

8 years in machine learning. I built the AI platform team at Europe’s biggest EdTech scaleup from scratch.

5 production AI systems, evaluation and monitoring pipelines, autonomous AI agents. That’s the engineering side. AI transformation OKRs, tooling standards rolled out per engineer, adoption measurement and reporting. That’s the leadership side. I owned both for 3 years. I know what breaks when AI is everyone’s side project and what changes when someone owns it. Now I do that for companies that can’t wait 12 months to hire.


AI delivers when someone owns it. Let’s talk.

30-minute intro call. No commitment. You’ll know by the end whether this is the right fit.